Your AI rollout isn't failing because of the technology

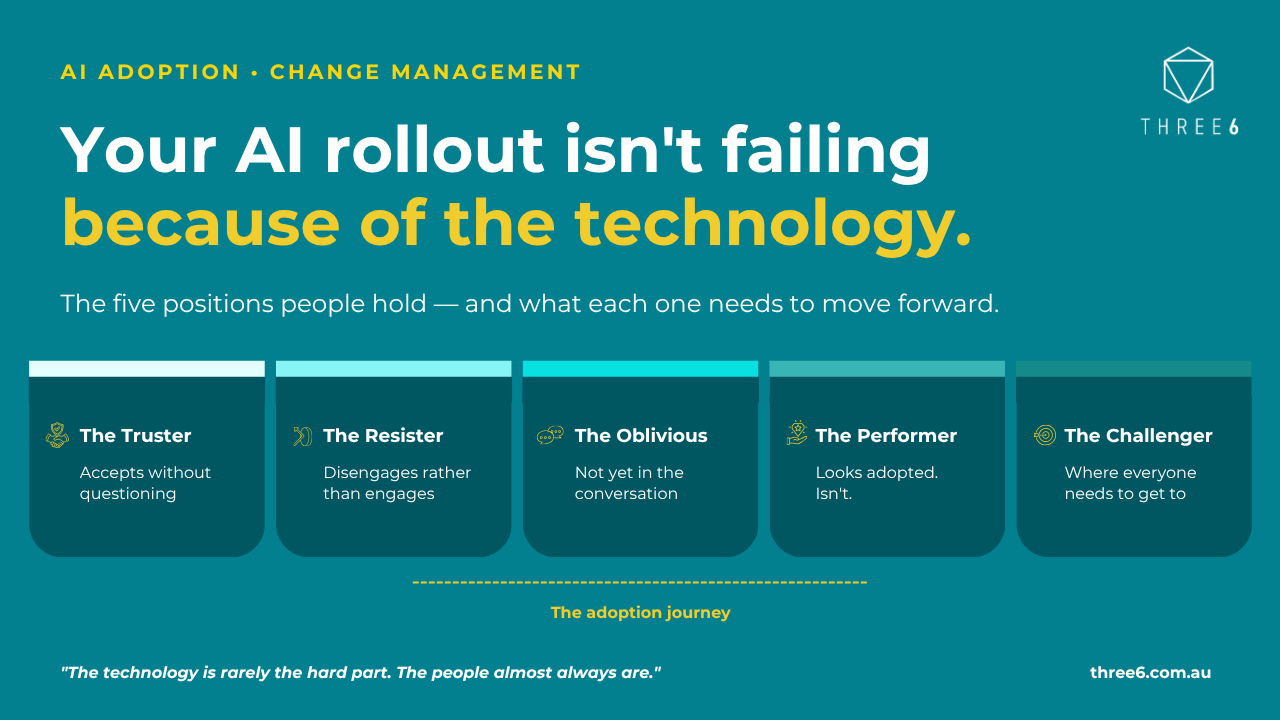

The five positions people hold when AI arrives in an organisation. Understanding where your people are starting from is the first step toward adoption that actually holds.

There's a pattern playing out in organisations right now that almost nobody is talking about honestly, and it tends to follow the same sequence almost every time.

It starts with a policy: governance, security, and acceptable use. That is the right place to start and a necessary foundation. Then comes an announcement about productivity gains and efficiency targets, usually tied to a specific tool that's being rolled out across the organisation. People get access, there's some training, a few pilot teams generate early wins that get shared at the all-hands, and for a while the momentum feels real. There might be chatbot experiments, a productivity tool embedded into the daily workflow, and some genuine enthusiasm from the people who were already curious about this space.

And then, somewhere around the six-month mark, something happens that's easier to feel than to name. The usage data looks reasonable. People are logging in. The pilots have been reported as successful. But when you look at actual outcomes (the quality of decisions being made, the work being produced, the time genuinely being recovered), the picture is murkier than the early reports suggested. The gains aren't compounding. Leaders are starting to ask questions that don't have clean answers. And without quite knowing how it got there, the organisation has arrived at a plateau.

This isn't a technology failure. It's a design failure: specifically, a failure to design the adoption for the actual people who are living through it.

The five positions - and why one training approach can't reach all of them

When AI lands in an organisation, it doesn't land the same way for everyone. People are sitting in genuinely different places: different levels of trust, different fears, different relationships with technology and with change. Most adoption programs treat this complexity as if it doesn't exist, rolling out a single training approach and hoping that sufficient enthusiasm from leadership will carry everyone forward.

It doesn't. And the reason comes down to understanding where people are actually starting from.

The truster has embraced AI readily, perhaps too readily. They accept what it produces without interrogating it, haven't developed the habit of healthy scepticism, and are building workflows around outputs they haven't learned to critically evaluate. They're productive in the short term and quietly accumulating risk in ways neither they nor their organisation have noticed yet.

The resister sees AI as a threat to something real (their expertise, their role, their sense of what it means to do good work), and they disengage rather than engage. They complete the mandatory training and return to what they know. They're not obstructing anyone; they're simply absent from the conversation in a way that looks enough like compliance that nobody pushes further.

The oblivious haven't arrived at the question yet. They're doing their job, AI is happening somewhere around them, and they haven't connected it to their daily reality in any personal or meaningful way. They're the largest group in most organisations and the most overlooked in adoption planning, precisely because they don't make noise and don't appear on anyone's risk register.

The performer is the hardest position to spot, because nothing on the surface gives them away. They can speak the language of AI adoption fluently, they show up to the sessions, and they say the right things in the right meetings, yet in practice they've quietly reverted to their established way of working. They look adopted. They aren't. And the gap between appearance and reality tends to surface only when you look at outcomes rather than activity.

The challenger is where every organisation wants its people to land. They use AI actively, they question what it produces, they understand where it tends to go wrong, and they've built a relationship with the technology that genuinely enhances their work without making them dependent on it. They're neither credulous nor cynical; they're curious, critical, and getting better at both over time.

The reason most AI rollouts plateau is that organisations design for the challengers and assume the rest will follow. They won't, not because they're incapable, but because each position requires something meaningfully different, and a single adoption program can only really speak to one of them well.

The question leaders always ask here and the honest answer

The response I hear most often when I describe these five positions is: "That makes sense, but we don't have the time or the resources to run five different programs. We have a hundred other priorities and an organisation to keep running."

It's a completely fair point, and I want to give it a real answer.

You don't run five separate programs. You design one adoption journey with enough range in how it's delivered that it can meet people where they are, rather than asking everyone to come to the same place at the same time and absorb the same message in the same way. The distinction matters: running five programs is far more complicated than running one journey with range built in.

The most effective approach we've seen is continuous, bite-sized engagement delivered through the channels and rhythms that already exist in people's working lives: short video snippets shared in the platforms people actually use, five-minute conversations embedded in team meetings that are already happening, peer-led demonstrations rather than formal training, and small experiments with real work rather than theoretical exercises. The content is designed to speak differently to different positions without those positions ever needing to be labelled or separated.

We did something similar when we were working with a hospital navigating a major operational shift. The workforce included office staff, surgeons, nurses, and allied health professionals, all with completely different working patterns, different educational backgrounds, different relationships with institutional authority, and different ways of consuming new information. A mass communication strategy would have reached everyone and landed with almost no one.

So instead, we crafted different messaging and different delivery approaches for each group, not different content at its core, but different framing, different formats, different touchpoints designed to meet people in the moments and spaces that actually worked for them. A surgeon isn't going to stop between procedures to watch a twenty-minute training video. But they will engage with a two-minute clinical scenario that's relevant to their specific context, delivered through a channel they already trust. Office staff want to understand the practical implications for their daily workflow, not the strategic rationale. Nurses want to know who to ask when something goes wrong.

The same principle applies to AI adoption. You're not trying to get everyone to the same place at the same time; you're trying to move everyone forward from where they actually are, at a pace and through a medium that makes sense for them.

What underpins all of it

What makes this possible, and what most organisations haven't done before they start designing adoption approaches, is understanding your people well enough to know where each group is sitting. That means listening before designing, which is harder and slower than it sounds when there's pressure to show progress in adoption. But the organisations that do it end up with something the others are still chasing six months into their plateau: genuine, sustained behaviour change rather than usage statistics that look good until someone asks what the technology is actually producing.

The other thing that underpins it, and this is where the technology question connects back to something more fundamental, is having clarity about what you're asking AI to do for your organisation and why. The clearest signal that an AI rollout is designed for technology's sake rather than people's sake is when nobody can answer the question: What experience are we trying to create? Not what efficiency are we targeting, but what does great actually look like for the person doing this work or receiving this service?

When you can answer that question clearly, the adoption design becomes significantly more straightforward, because you're not asking people to adopt a tool in the abstract; you're asking them to move toward a future state that makes sense for them. That's a completely different conversation.

The technology is rarely the hard part. The people almost always are. And the organisations that understand that, early, honestly, before the plateau, are the ones whose AI adoption stories are actually worth telling.

Where are your people sitting right now? Do you know?
